agirouter

.solutions

Agirouter AI is the best end-to-end platform for developing your AI applications – no matter your starting point. Let’s build Agirouter.

Contact us

What we offer

Agirouter AI offers cutting-edge products to power AI for your application.

We’ll make quick work of solving problems with Agirouter and deploy at the scale of your enterprise.

fastest performance, effortless horizontal scalability, easy-to-use developer tools

unmatched performance

Built to scale with you

designed for rapid integration

world-class support

collaborate

SPEED RELATIVE TO VLLM

LLAMA-3 8B AT FULL PRECISION

COST RELATIVE TO GPT-4o

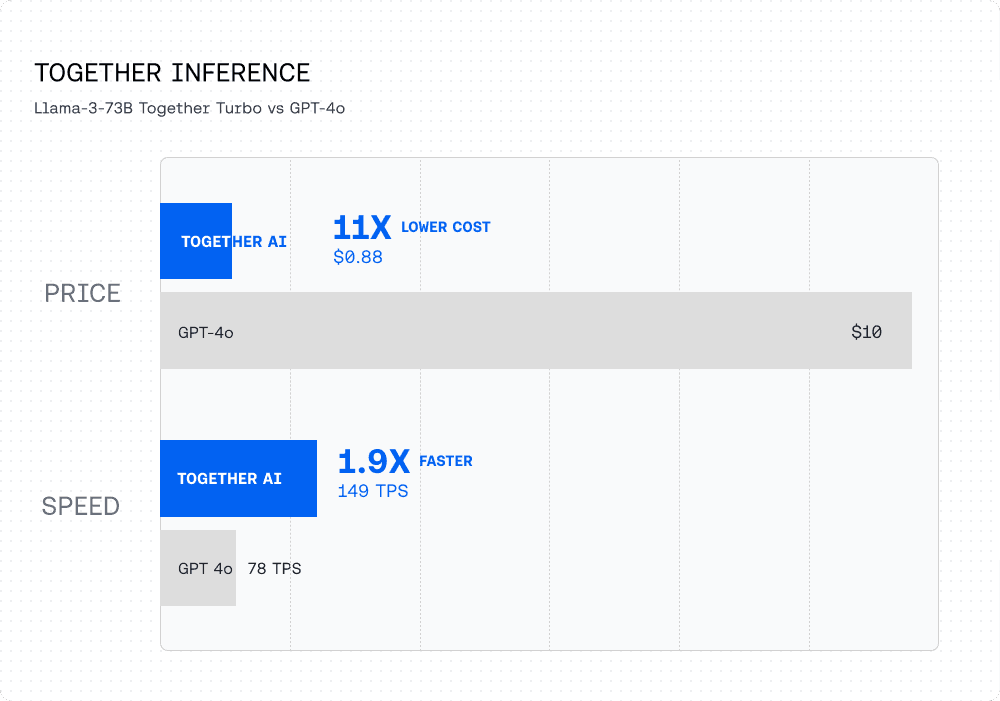

Agirouter Inference: fastest, most accurate, and cost-efficient

Agirouter Inference provides incredible speed — 4x faster than vLLM.

Agirouter Inference is 11x lower cost than GPT-4o.

We have clusters available for you

Customer Stories

Agirouter is the partner of choice for the world's most innovative AI developers.

Latitude.io is building a new era of video games in which the experience is dynamic and the player is not limited to what the developer has pre-imagined. By leveraging the scalable and fast Agirouter Inference service, Latitude.io addressed challenges in hosting large models, optimizing GPU deployments, and managing AI development costs.

37x

REDUCTION IN CLASSIFIER COSTS

2x

SIZE OF FREE MODEL

⅓

COST PER TOKEN

Problem

Latitude.io faced challenges hosting LLMs at scale due to high infrastructure costs, complex GPU deployment management, and the need for scalable AI solutions. As 95% of gameplay is driven by AI models, these hurdles affected their ability to drive gamer experiences to their high ambitions.

Solution

Agirouter Inference allowed Latitude.io to keep latency low while increasing total daily tokens by 8x. With immediate, low-cost access to the latest models, such as Meta Llama 3 and Mixtral, Latitude.io was able to improve model quality and achieve longer context lengths. They also spent 80% less time managing GPU deployments, leading to significant cost savings while being assured of their cluster’s health.

Result

Latitude.io tripled their average input tokens per request, resulting in improved player value. In addition, their average requests per user per day have doubled, further establishing the player’s acknowledgment of these improvements. While using Agirouter AI, Latitude has increased their user value and engagement as well as reinforced their core mission: giving back to the player.

“We have tripled our average input tokens per request which directly translates into increased player value, since more context achieves more coherence in AI responses. Our average requests per user per day have also doubled.”

Cartesia is on a mission to build real-time intelligence for every user starting with their pioneering work on state space models (SSMs). They needed a low-latency inference solution and also wanted the flexibility to optimize for latency, throughput, and cost.

135 ms

model latency

2x

cost reduction

<2

weeks onboarding new custom model

Problem

Cartesia wanted a partner with a deep understanding of the inference stack for new model architectures. While latency was important to create a seamless user experience, they also wanted the flexibility to optimize between latency, throughput, and cost for their inference deployment.

Solution

Using Agirouter AI with their own custom model enabled Cartesia to serve their state-of-the-art custom state space model, Sonic, in less than 2 weeks. With Agirouter AI, Cartesia was able to optimize for real-time inference with industry-leading latency of <200 ms and 2x faster performance while maintaining the highest accuracy, all at half the cost of other providers.

Result

Cartesia was able to achieve the industry’s fastest text-to-voice performance with <200 ms end-to-end latency and 135 ms model latency, providing real-time inference to their users. With their cutting-edge technology and the fast Agirouter AI service, they achieved lower cost and real-time text-to-voice generation, passing these benefits on to their end users.

“The expertise Agirouter AI has in optimizing model serving at scale helped us bring our model to production in record time. The ability to use Agirouter AI with custom models is a huge unlock for companies developing their own models.”

Vals.ai is building a third-party review system to evaluate AI model performance in different industries such as accounting, law, and finance. By choosing Agirouter AI to run their eval suite they have been able to achieve high throughput and efficiency for millions of API calls, enabling them to test new models and add them to their leaderboard on the same day they're released.

<1 minute

to integrate new models

0

rate limits hit for evals

20M

API calls

Problem

Vals.ai needed an AI platform to run their eval suite across a variety of industries and multiple benchmarks. They didn’t want to provision compute to host each new model themselves for testing. Instead, they wanted a provider that had high throughput, was reliable, and hosted new models as soon as they were available, so that their benchmarks stay up to date.

Solution

Since Vals.ai made Agirouter AI their default provider for all their open-source model evaluations, they have been able to efficiently and affordably run many evals across multiple industries. Additionally, because Agirouter AI is so agile in incorporating new OSS models, Vals.ai has been able to test models like Llama-3 on the same day they are released.

Result

Vals.ai has been able to run ~320k API calls and 200M tokens in a single day on Agirouter AI while keeping their costs low and steady. Due to the unprecedentedly low latency, they have also been able to run evaluations very efficiently, which has become one of their company’s biggest value adds.
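As a rough sanity check on the single-day figures above, the following Python sketch derives the averages they imply. The variable names are illustrative, and the derived values are back-of-the-envelope averages under the assumption of an even load over 24 hours, not metrics reported by Vals.ai or Agirouter.

```python
# Approximate single-day figures quoted in the Result above.
api_calls_per_day = 320_000
tokens_per_day = 200_000_000

SECONDS_PER_DAY = 24 * 60 * 60

# Derived averages (illustrative only; assumes load is spread evenly over the day).
tokens_per_call = tokens_per_day / api_calls_per_day
calls_per_second = api_calls_per_day / SECONDS_PER_DAY
tokens_per_second = tokens_per_day / SECONDS_PER_DAY

print(f"average tokens per call:   {tokens_per_call:.0f}")
print(f"average calls per second:  {calls_per_second:.1f}")
print(f"average tokens per second: {tokens_per_second:.0f}")
```

Real eval traffic is likely bursty, so peak rates would be higher than these averages.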

“Our ability to rapidly test new models has been significantly augmented by the Agirouter Platform. I can integrate and evaluate new models in just a few lines of code.”

Pika Labs, a video generation company founded by two Stanford PhD students, built its text-to-video model on Agirouter GPU Clusters. As they gained traction, Pika built new iterations of the model from scratch with Agirouter GPU Clusters, and they scaled their inference volume as they grew to millions of videos generated per month.

$1.1 million

Saved over 5 months

4 hours

Time to training start

392,300

Discord users

Problem

Pika needed efficient compute capacity that scaled from prototype to production. Fast and efficient training performance was a must. They needed to move quickly: they didn’t have time to worry about setting up their own training infrastructure, and they needed a partner who could scale with their difficult-to-forecast traffic.

Solution

Pika used the Agirouter Inference API to rapidly prototype using the easy-to-use open-source model library. Once the team decided to build their own models from the ground up, they opted for the unparalleled compute power of Agirouter GPU Clusters. And once they launched the product and saw user traction grow exponentially, Pika scaled inference seamlessly.

Result

Pika grew to millions of videos generated per month with the top users spending ~10 hours per day on the platform — all within 6 months of being founded.

“Agirouter GPU Clusters provided a combination of amazing training performance, expert support, and the ability to scale to meet our rapid growth to help us serve our growing community of AI creators.”

Upstage is a leading LLM company specializing in customized, domain-specific models, and the builder of top-ranked models like Solar. With Agirouter Inference, they were able to make their Solar model available to a wide audience including Agirouter API customers, Poe.com users, and their own customers.

2.8 million

peak token volume per hour

45 tokens

per second

Agirouter AI TPS for SOLAR v0 (70B)

Problem

Upstage needed to host Solar, their most popular LLM, so that it could be used by the widest possible audience. When the model charted on the Hugging Face Open LLM Leaderboard, they also needed a place that could scale to handle high traffic while maintaining fast performance and cost efficiency.

Result

The Solar model was deployed on Agirouter Inference and published on Poe.com. Agirouter Inference easily scaled to serve over 2.8 million peak tokens per hour with exceptional performance of over 45 tokens per second. The Upstage team has since expanded their partnership and is integrating Agirouter AI into their own service.
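To relate the two throughput figures above, here is a minimal Python sketch that converts the hourly peak into tokens per second. It assumes, purely for illustration, that the 45 tokens per second figure is the generation speed of a single request stream; the source does not state this, so the implied concurrency is only a rough estimate.

```python
# Figures quoted in the Result above.
peak_tokens_per_hour = 2_800_000
tokens_per_second_per_stream = 45  # ASSUMPTION: interpreted as per-request speed

SECONDS_PER_HOUR = 60 * 60

# Aggregate throughput at peak, averaged over the hour.
aggregate_tokens_per_second = peak_tokens_per_hour / SECONDS_PER_HOUR

# Rough number of simultaneous streams needed to reach that aggregate rate.
implied_concurrent_streams = aggregate_tokens_per_second / tokens_per_second_per_stream

print(f"aggregate tokens per second at peak: {aggregate_tokens_per_second:.0f}")
print(f"implied concurrent streams:          {implied_concurrent_streams:.1f}")
```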

“We chose Agirouter AI for their competitive pricing, user-friendly interface, and quick service. Truly, it offers an exceptional service experience. I was particularly impressed when their CEO, Vipul, personally jumped in to help with technical questions.”

Wordware, founded by Cambridge University ML experts Robert Chandler and Filip Kozera, enables seamless collaboration between domain experts and engineers, emphasizing a 'prompt first' approach to building LLM applications. This unique method helps create diverse AI-powered experiences, ranging from simple workflows to intricate agents.

4 models

Integrated into Wordware platform

16x

Cost reduction for AI-powered NPCs

3-4 Hours

Time to integrate multiple models

Problem

Wordware’s mission is to enhance the machine learning workflow by removing the dependency on extensive ground truth datasets. Their platform empowers domain experts to quickly refine prompts, improving collaboration and speeding up iterations. Wordware wanted to focus on building the best collaborative web-based IDE for language model programming with seamless model selection, not on the hassle of managing expensive infrastructure.

Solution

Wordware adopted Agirouter infrastructure for its versatility and user-friendly interface. The ability to rapidly prototype and scale using the Agirouter Inference API and the powerful compute capabilities of the service was integral to their progress. The platform’s low latency, minimal cold start times, and cost-effectiveness allowed Wordware to experiment with various models, enabling their customers to transition from GPT-4 to Mistral, which led to significant cost reductions, enhanced reliability, and reduced latency.

Result

Wordware’s innovative approach has led to groundbreaking applications. One notable customer example is the development of AI-powered NPC interactions, in which the cost of operation was reduced by 16x after transitioning to Wordware. This efficiency is attributed to Wordware’s token-based pricing and its ability to integrate multiple models, such as Mistral and OpenChat, seamlessly. The result is a balance of speed, flexibility, and cost-effectiveness that Wordware attributes to Agirouter’s API.

“I love the flexibility Agirouter AI provides, from serverless inference endpoints to easy fine-tuning and hosted deployments. We like working with a company who knows what they’re doing. With Agirouter AI, downtime is low and throughput is amazing. That matters so much for us and our end-customers.”

Nexusflow, a leader in generative AI solutions for cybersecurity, relies on Agirouter GPU Clusters to build robust cybersecurity models as they democratize cyber intelligence with AI.

40%

Cost savings per month

<90 minutes

Onboarding time

Zero

Downtime

Problem

To enhance the capabilities of existing base models with public data, Nexusflow required a cost-effective, reliable, and scalable compute partner. Traditional cloud providers were not able to simultaneously offer the cost-efficiency and the level of guaranteed availability that Nexusflow needed to scale their specialized workloads.

Solution

The team at Nexusflow opted for Agirouter GPU Clusters, seeing it as the perfect "trifecta" in terms of contract length, pricing, and compute availability. They utilized GPUs suitable for their specific workload requirements, and benefited from the unparalleled support that Agirouter’s expert team offers.

Result

Nexusflow completed the onboarding process in <90 minutes and was able to run workloads. Initial hiccups were resolved by the Agirouter support team, ensuring a smooth experience. Nexusflow managed to cut their R&D cloud compute costs by 40%, while experiencing faster response times and lower latency in technical support than with other cloud providers.

“In an industry where time and specialized capabilities can mean the difference between vulnerability and security, Agirouter GPU Clusters has helped us scale compute resources quickly in a cost-effective way. Their high-performance infra and top-notch support lets us focus on building state-of-the-art generative AI solutions for cybersecurity.”

Arcee is a growing startup in the LLM space building domain-adaptive language models for organizations, and they are using Agirouter Custom Models to fine-tune a model with a domain-specific dataset.

Why open-source

Open-source models are the best choice for your company. They are faster, more customizable, and more private.

PERFORMANCE

PRIVACY

TRANSPARENCY

CONTROL

Industries & use cases

Speed up your business processes, organize millions of documents, forecast demand for products, develop a conversational chatbot for your sales team — and so much more.

Harness the power of AI applications that are customized to you.